Google shows off its new text-to-image AI

- May 25, 2022

- Updated: July 2, 2025 at 3:43 AM

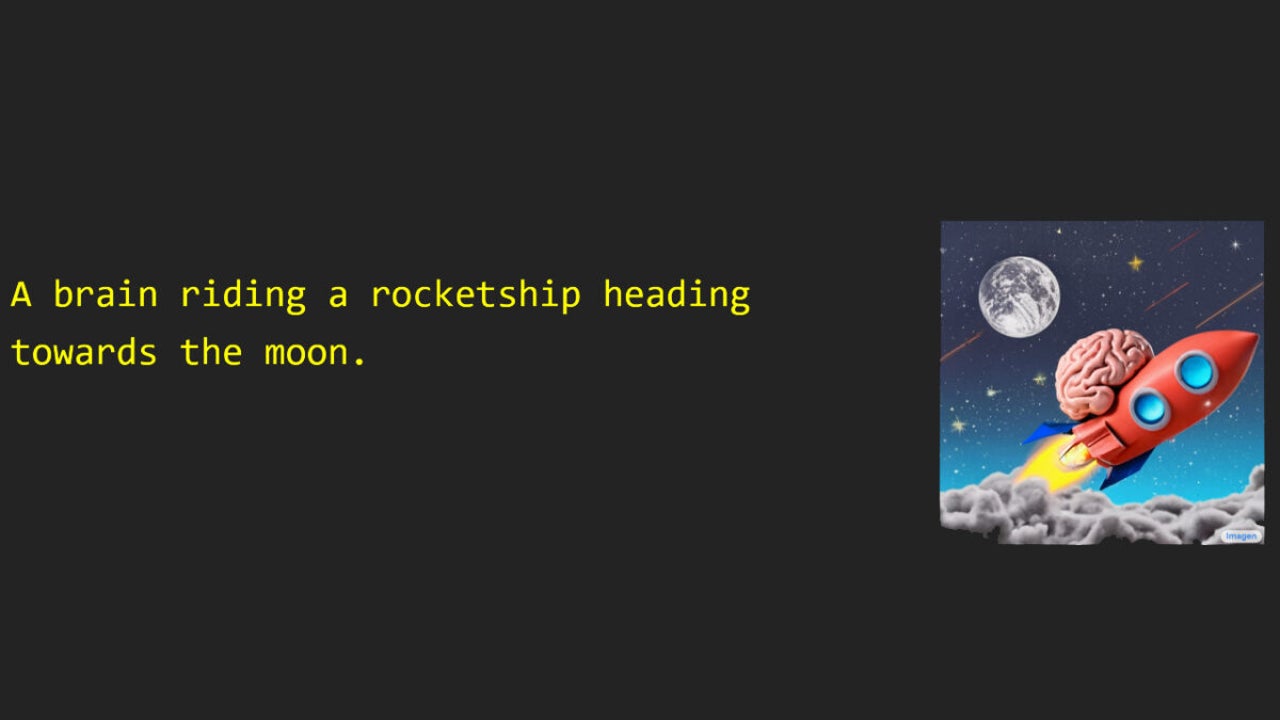

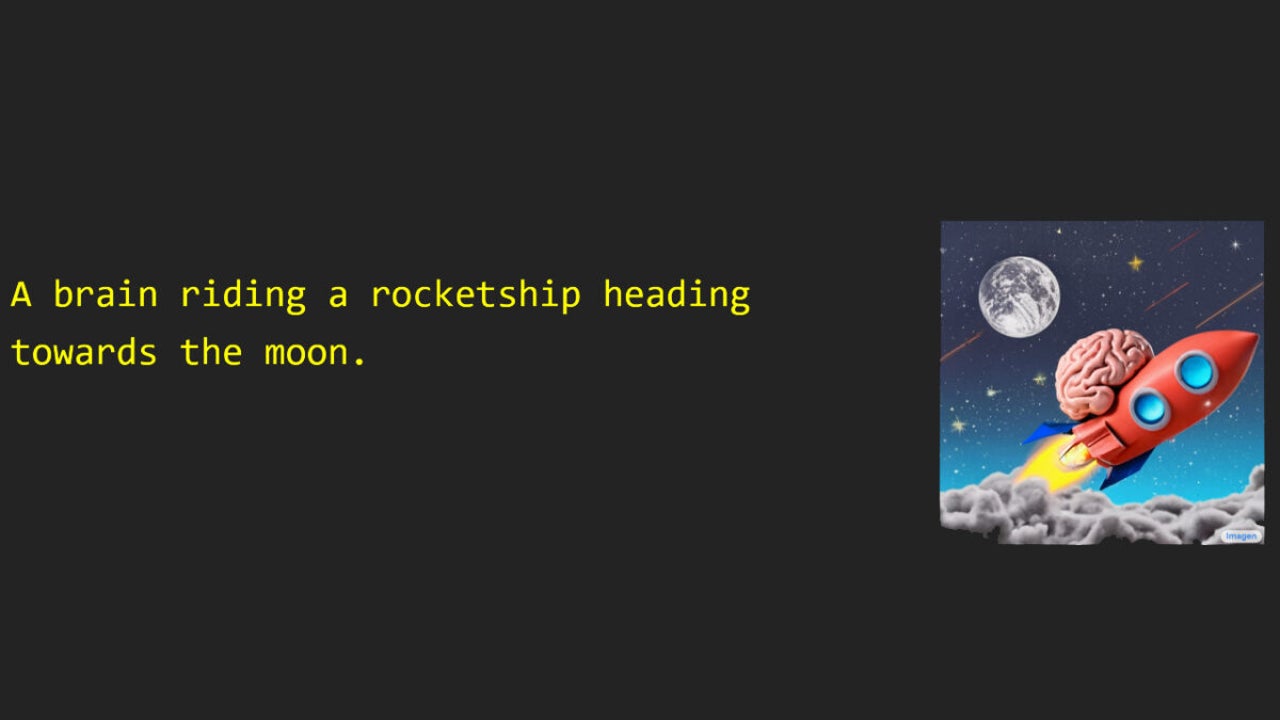

AI is a fascinating field that opens up many possibilities. You design an algorithm and train it on large amounts of data to create a tool that works a bit like a box. You put something into the box, such as a prompt or an instruction, and what comes out the other side is based on that prompt as well as on all of the data and training that created the tool in the first place. This is particularly true of AI text-to-image generators, and Google has just released details of Imagen, its very own text-to-image generator.

Basically, all you have to do with Imagen is type out a prompt describing the image you want and, hey presto, Imagen will create an image that matches your instructions.

The results are impressive too, or at least the results Google has shared are. With other text-to-image AI such as DALL-E, which was developed by OpenAI, images often come out blurred or look unfinished. None of the images Google has shared show these traits. This could mean that Google has cherry-picked the best images rather than releasing everything the tool created, or it could mean that, as Google claims, Imagen consistently produces better images than DALL-E.

As great as all this sounds, though, Google has still concluded that Imagen is not ready for public use. As mentioned earlier, you need data to train AI tools like Imagen, and lots of it. Unfortunately, unless that data has been painstakingly labelled, the tool won’t be able to distinguish facts from biased opinions. This means that much of the data used to train these types of AI tools will actually train them to exhibit biases themselves.

Essentially, until Google can figure out a way to neutralize the biases present in the training data, the output of tools like Imagen is going to contain just as much racism, sexism, and pretty much every other -ism as is currently present on the internet. For Imagen, a tool that can create fake images that look real, that is a rather dangerous possibility.

If you are interested in this type of thing, you should read about an inclusive update for Google Photos that we covered recently, which will have implications for how Google creates and trains AI tools like Imagen.

Patrick Devaney is a news reporter for Softonic, keeping readers up to date on everything affecting their favorite apps and programs. His beat includes social media apps and sites like Facebook, Instagram, Reddit, Twitter, YouTube, and Snapchat. Patrick also covers antivirus and security issues, web browsers, the full Google suite of apps and programs, and operating systems like Windows, iOS, and Android.
